NVIDIA’s Blackwell architecture was unveiled at GTC 2024, and the first commercial systems started reaching select hyperscalers in late 2024. By September 2025 there are enough real deployments, public measurements, and operational experience to evaluate what actually changes for large-model training. This review focuses on GB200 NVL72, where Blackwell’s design logic is most fully expressed, rather than on standalone B200 or B100 systems.

The core idea: the rack as the unit

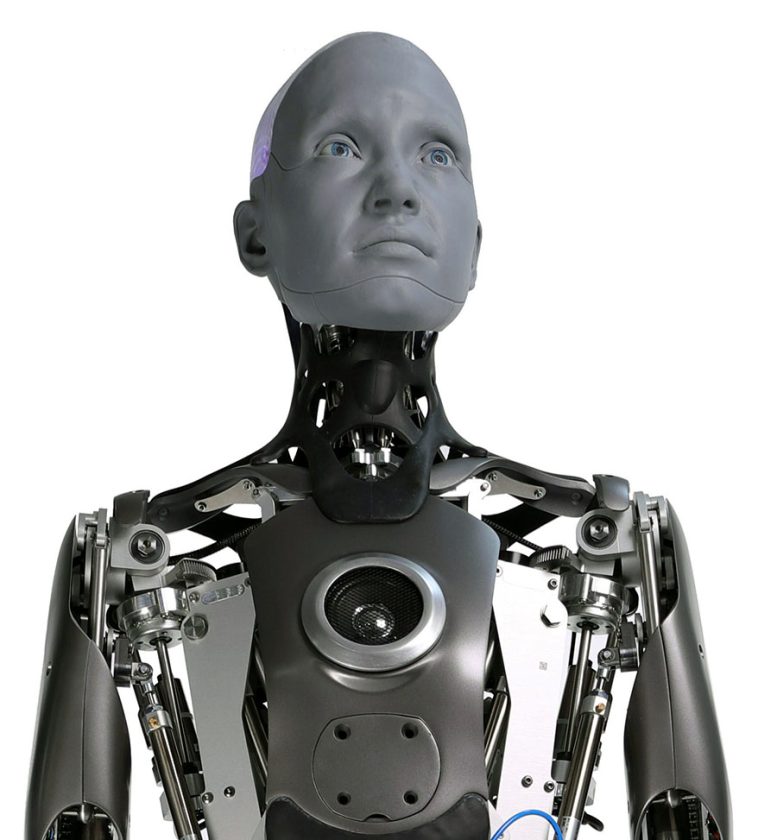

The most distinctive thing about Blackwell is not the GPU itself, even though the leap over Hopper is substantial. It is that NVIDIA has stopped treating the GPU as the product and started designing racks. GB200 NVL72 integrates 72 Blackwell GPUs and 36 Grace CPUs in a single cabinet drawing roughly 120 kilowatts, interconnected by fifth-generation NVLink at 1.8 terabytes per second per GPU. To software, the rack looks like one large NVLink domain with about 13.5 terabytes of HBM3e in aggregate.
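The rack-level figures follow from simple per-GPU arithmetic. A quick sketch, assuming 72 GPUs at 1.8 TB/s of NVLink bandwidth each (the bidirectional per-GPU figure) and the 13.5 TB aggregate HBM3e quoted above, with an 8-GPU HGX H100 node at 80 GB per GPU as an assumed comparison point. The helper name is illustrative.

```python
# Back-of-the-envelope NVL72 figures (illustrative helper, not from any library).
GPUS_PER_RACK = 72
NVLINK_TBPS_PER_GPU = 1.8      # fifth-generation NVLink, per GPU, bidirectional
RACK_HBM_TB = 13.5             # aggregate HBM3e across the rack

HGX_H100_GPUS = 8              # assumption: one HGX H100 node as the old NVLink domain
H100_HBM_GB = 80               # assumption: H100 SXM with 80 GB of HBM


def nvl72_summary():
    """Return aggregate NVLink bandwidth, HBM per GPU, and domain-memory ratio vs HGX H100."""
    aggregate_nvlink_tbps = GPUS_PER_RACK * NVLINK_TBPS_PER_GPU
    hbm_per_gpu_gb = RACK_HBM_TB * 1000 / GPUS_PER_RACK
    hgx_domain_gb = HGX_H100_GPUS * H100_HBM_GB
    domain_memory_ratio = RACK_HBM_TB * 1000 / hgx_domain_gb
    return aggregate_nvlink_tbps, hbm_per_gpu_gb, domain_memory_ratio


if __name__ == "__main__":
    bw, per_gpu, ratio = nvl72_summary()
    print(f"aggregate NVLink: {bw:.1f} TB/s")                    # 129.6 TB/s
    print(f"HBM per GPU: {per_gpu:.1f} GB")                      # 187.5 GB
    print(f"NVLink-domain memory vs HGX H100: {ratio:.1f}x")     # 21.1x
```

NVIDIA’s own materials round the aggregate NVLink figure to about 130 TB/s; the point is that the high-bandwidth memory pool reachable over NVLink grows by roughly twentyfold compared with an 8-GPU Hopper node.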

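This is a significant conceptual shift. On Hopper systems such as HGX H100, the NVLink domain ended at eight GPUs; anything larger crossed InfiniBand or Ethernet, so model parallelism meant partitioning the model so that the most communication-intensive traffic stayed inside a node. With GB200 NVL72, all 72 GPUs can access each other’s memory directly over NVLink, with higher latency than local HBM but far more bandwidth than a network hop. Collectives still typically go through NCCL, but the high-bandwidth domain is nine times larger, which simplifies communication-heavy patterns such as tensor parallelism and expert parallelism in mixture-of-experts models.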
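To see why the size of the NVLink domain matters, a standard bandwidth-only ring all-reduce estimate helps: each GPU moves about 2(N-1)/N of the message, and the ring runs at the speed of its slowest link. The sketch assumes 900 GB/s per direction for NVLink 5 (half of the 1.8 TB/s bidirectional figure) and 50 GB/s per GPU for a 400 Gb/s InfiniBand NIC on HGX H100. It ignores latency and protocol overhead, so the ratio, not the absolute times, is the point. The function name is illustrative.

```python
def ring_allreduce_seconds(message_bytes: float, n_gpus: int, link_bytes_per_s: float) -> float:
    """Illustrative bandwidth-only ring all-reduce estimate.

    Each GPU sends (and receives) 2 * (n - 1) / n of the message, and the ring
    runs at the speed of its slowest link. Latency terms are ignored.
    """
    if n_gpus < 2:
        return 0.0
    return 2 * (n_gpus - 1) / n_gpus * message_bytes / link_bytes_per_s


NVLINK5_BYTES_PER_S = 900e9   # assumption: per-direction NVLink 5 bandwidth per GPU
IB_NDR_BYTES_PER_S = 50e9     # assumption: one 400 Gb/s NIC per GPU

if __name__ == "__main__":
    message = 1 << 30  # 1 GiB of activations or gradients to all-reduce
    group = 16         # a 16-way tensor-parallel group
    # On NVL72 the whole group stays inside one NVLink domain.
    t_nvl72 = ring_allreduce_seconds(message, group, NVLINK5_BYTES_PER_S)
    # Across two 8-GPU HGX H100 nodes, the ring must cross the network.
    t_two_nodes = ring_allreduce_seconds(message, group, IB_NDR_BYTES_PER_S)
    print(f"NVL72: {t_nvl72 * 1e3:.2f} ms, two HGX nodes: {t_two_nodes * 1e3:.2f} ms")
    print(f"ratio: {t_two_nodes / t_nvl72:.0f}x")
```

A real two-node ring also has fast NVLink hops inside each node, but the inter-node links set the pace, which is why tensor parallelism was usually kept within eight GPUs on Hopper.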

Real-world performance: what the industry is measuring

NVIDIA’s headline figures point to 4x training throughput over H100 at the same precision, and up to 30x faster real-time inference on trillion-parameter models when Blackwell runs FP4 against an FP8 Hopper baseline. In practice, early customers report more moderate but still significant gains. MLPerf Training v5.0 results, published in June 2025, put GB200 NVL72 at roughly 2.2x to 2.8x the per-GPU training throughput of H100 on large language model workloads such as Llama 3.1 405B pretraining.

The gap between the theoretical 4x and the roughly 2.5x measured in practice has two main sources. Part comes from headline benchmarks assuming FP8 or FP4 precision, while real training still runs much of its math in BF16 for stability. Part comes from NVIDIA’s Blackwell software stack, particularly cuDNN 9 and recent Transformer Engine releases, which is still maturing. Upcoming releases may narrow the gap toward 3x.
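One way to see how a 4x peak becomes roughly 2.5x end to end is Amdahl’s law: the overall speedup is a harmonic blend of per-component speedups, weighted by each component’s share of the baseline step time. The step-time fractions and per-component speedups below are hypothetical, chosen only to illustrate the mechanism; they are not measured Blackwell figures.

```python
import math


def blended_speedup(parts):
    """Amdahl's law over several components (illustrative helper).

    parts: iterable of (fraction_of_baseline_time, speedup_for_that_component).
    Fractions must sum to 1.
    """
    parts = list(parts)
    total = sum(fraction for fraction, _ in parts)
    if not math.isclose(total, 1.0):
        raise ValueError(f"fractions sum to {total}, expected 1.0")
    return 1.0 / sum(fraction / speedup for fraction, speedup in parts)


if __name__ == "__main__":
    # Hypothetical H100 step-time breakdown and per-component Blackwell speedups.
    step = [
        (0.7, 3.0),  # matrix multiplies, partly in BF16 rather than FP8
        (0.2, 2.0),  # attention, normalization and other non-GEMM kernels
        (0.1, 1.5),  # communication and data loading
    ]
    print(f"end-to-end speedup: {blended_speedup(step):.2f}x")  # 2.50x
```

Even if the dominant component improves by 3x, the parts that improve less pull the total toward the lower number, which is why software maturity on the non-GEMM paths matters as much as raw tensor throughput.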

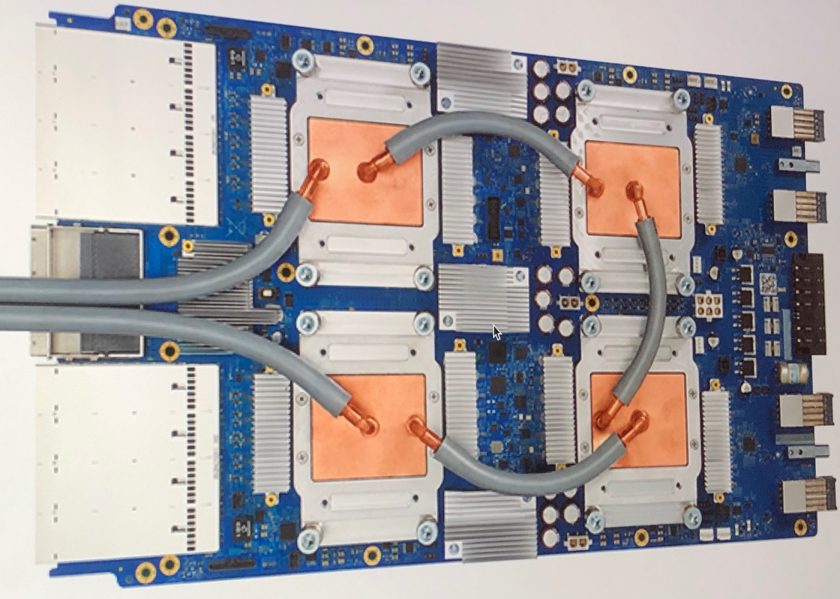

The other relevant number is power. A B200 is rated at up to 1,000 watts, versus 700 for an H100 SXM. A full GB200 NVL72 rack draws about 120 kilowatts, roughly 10x a traditional general-purpose server rack. That density forces direct-to-chip liquid cooling, which was niche in 2023 and by 2025 has become the default for new AI data centers.
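Higher absolute draw does not mean worse efficiency. A sketch of the arithmetic, assuming the per-GPU throughput gains of 2.2x to 2.8x reported above and the nameplate 1,000 W versus 700 W ratings; real draw varies with workload and with how each system sets the GPU power limit. Function names are illustrative.

```python
def perf_per_watt_gain(throughput_ratio: float, new_watts: float, old_watts: float) -> float:
    """Throughput-per-watt improvement of a new GPU over an old one (illustrative helper)."""
    return throughput_ratio / (new_watts / old_watts)


def rack_energy_kwh(rack_kw: float, hours: float) -> float:
    """Energy drawn by a rack running at full power for the given hours (illustrative helper)."""
    return rack_kw * hours


if __name__ == "__main__":
    for ratio in (2.2, 2.5, 2.8):
        gain = perf_per_watt_gain(ratio, new_watts=1000, old_watts=700)
        print(f"{ratio}x throughput -> {gain:.2f}x throughput per watt")
    print(f"one NVL72 day at 120 kW: {rack_energy_kwh(120, 24):.0f} kWh")
```

Under these assumptions Blackwell does roughly 1.5x to 2x more work per joule; it is the concentration of that power in one cabinet, not the efficiency, that drives the move to liquid cooling.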

FP4 and quantization

One of Blackwell’s technical novelties is native FP4 support, a 4-bit-per-element format that Hopper’s tensor cores lack. FP4 is not yet practical for mainstream training, where gradients and optimizer updates need more precision to converge reliably, but it is very useful for inference on large models. A model trained in BF16 and quantized to FP4 occupies roughly a quarter of the space (slightly more once per-block scale factors are counted), needs roughly a quarter of the memory bandwidth to stream its weights, and runs much faster on hardware that supports the format natively.
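The "roughly a quarter" figure depends on how scale factors are stored, since block-scaled FP4 formats attach a shared scale to each small block of elements. A sketch for a hypothetical 70-billion-parameter model, assuming the OCP MXFP4 layout (an 8-bit scale per 32 elements) and NVIDIA’s NVFP4 layout (an 8-bit FP8 scale per 16 elements), and ignoring per-tensor scales, layers kept at higher precision, and the KV cache. The helper name is illustrative.

```python
def weight_gigabytes(n_params: float, element_bits: float,
                     block_size: int = 0, scale_bits: float = 0.0) -> float:
    """Weight storage in GB, including per-block scale overhead (illustrative helper)."""
    bits = element_bits
    if block_size:
        bits += scale_bits / block_size  # amortized cost of the shared block scale
    return n_params * bits / 8 / 1e9


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    formats = {
        "BF16": weight_gigabytes(params, 16),
        "FP8": weight_gigabytes(params, 8),
        "MXFP4 (8-bit scale / 32)": weight_gigabytes(params, 4, 32, 8),
        "NVFP4 (8-bit scale / 16)": weight_gigabytes(params, 4, 16, 8),
    }
    for name, gb in formats.items():
        print(f"{name:>26}: {gb:7.2f} GB")
```

The scales add 0.25 to 0.5 bits per element, so FP4 weights land at about 27% to 28% of BF16 rather than exactly 25%.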

The catch is that not all models quantize well at 4 bits. For large dense transformers such as Llama or Mistral, FP4 with careful calibration typically loses between 0.5 and 2 points on standard benchmarks. For mixture-of-experts models or precision-sensitive tasks, the drop can be larger. The usual recommendation today is FP8 for high-quality inference and FP4 where cost matters more than the last points of quality.
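Calibration matters because 4-bit formats have very few representable values. The sketch below is a simplified block-scaled quantizer onto the FP4 E2M1 grid (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, 6): each block is scaled so its largest magnitude maps to 6, then every element is rounded to the nearest grid point. It keeps the scale in full precision, which real formats do not (MXFP4 restricts scales to powers of two, NVFP4 stores them in FP8), and the block size of 32 is an assumption. The function names are illustrative, not from any quantization library.

```python
import math

# Non-negative magnitudes representable in FP4 E2M1 (sign is stored separately).
FP4_E2M1_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(block):
    """Quantize one block to FP4 E2M1 with a shared scale; return dequantized values."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / FP4_E2M1_GRID[-1] if max_abs > 0 else 1.0
    out = []
    for x in block:
        # Round the scaled magnitude to the nearest grid point (ties go to the smaller value).
        q = min(FP4_E2M1_GRID, key=lambda g: abs(g - abs(x) / scale))
        out.append(math.copysign(q * scale, x))
    return out


def fake_quantize(values, block_size=32):
    """Quantize-then-dequantize a flat list block by block, as a calibration pass might."""
    result = []
    for start in range(0, len(values), block_size):
        result.extend(quantize_block(values[start:start + block_size]))
    return result


def rms_error(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


if __name__ == "__main__":
    smooth = [0.01 * i for i in range(-16, 16)]   # well-behaved block
    outlier = smooth[:-1] + [5.0]                  # same block with one large value
    for name, block in (("smooth", smooth), ("outlier", outlier)):
        deq = fake_quantize(block)
        zeros = sum(1 for x, y in zip(block, deq) if x != 0 and y == 0)
        print(f"{name}: rms error {rms_error(block, deq):.4f}, values flushed to zero: {zeros}")
```

A single outlier stretches the block scale so far that every small value rounds to zero; calibration techniques exist largely to keep such outliers from dominating a block.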

This has a practical implication: Blackwell is more attractive to operators with massive inference workloads than to operators who only train. A data center serving millions of queries per second benefits more from FP4 than one training a new model every two months. Hyperscalers have both workloads and can amortize the hardware across them; academic centers or startups tend to lean more toward training.

Software: still evolving

The software ecosystem has two levels. At the low level, CUDA 12.8, the first toolkit release with Blackwell targets, together with the matching cuDNN 9 and NCCL releases, provides stable support. At the high level, popular frameworks lagged behind. PyTorch 2.7, released in April 2025, was the first stable release with official Blackwell support and prebuilt CUDA 12.8 wheels; before that, teams relied on nightly or source builds. JAX followed a similar path, with Blackwell support maturing over the course of 2025.
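Before debugging performance, it is worth confirming that the installed framework build actually targets Blackwell. A minimal sketch, assuming that B200 and GB200 report compute capability 10.0 (`sm_100`) and that CUDA 12.8 is the minimum toolkit. The helper `build_supports_blackwell` is hypothetical; `torch.version.cuda` and `torch.cuda.get_arch_list()` are existing PyTorch attributes, used only if PyTorch is installed.

```python
def build_supports_blackwell(cuda_version, arch_list) -> bool:
    """Hypothetical helper: True if a framework build can target Blackwell (sm_100).

    cuda_version: string such as "12.8" (None for CPU-only builds).
    arch_list: compiled GPU architectures, e.g. ["sm_90", "sm_100"].
    """
    if not cuda_version:
        return False
    parts = cuda_version.split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    # Assumption: CUDA 12.8 is the first toolkit that can emit sm_100 code.
    return (major, minor) >= (12, 8) and "sm_100" in arch_list


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("PyTorch not installed; nothing to check")
    else:
        ok = build_supports_blackwell(torch.version.cuda, torch.cuda.get_arch_list())
        print(f"PyTorch {torch.__version__} (CUDA {torch.version.cuda}): Blackwell build = {ok}")
```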

The practical consequence is that buying or renting Blackwell in Q1 2025 meant fighting with immature software. By September 2025 the ecosystem is mature for standard model training, but edge cases remain. Very large mixture-of-experts training, certain pipeline-parallel patterns, or custom kernels compiled with Triton can still show performance or correctness issues. Serious teams keep a parallel Hopper environment for cross-checks.
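A Hopper cross-check usually comes down to comparing loss curves from the same configuration and seed on both platforms. A minimal sketch in which the function names, the smoothing window, and the 2% tolerance are all hypothetical choices; bitwise agreement is not expected, since kernels and reduction orders differ between architectures.

```python
def moving_average(values, window):
    """Trailing moving average; early steps average over what is available."""
    averages, running = [], 0.0
    for i, v in enumerate(values):
        running += v
        if i >= window:
            running -= values[i - window]
        averages.append(running / min(i + 1, window))
    return averages


def first_divergence(reference, candidate, rel_tol=0.02, window=10):
    """Hypothetical check: index of the first step where the smoothed candidate loss
    differs from the smoothed reference loss by more than rel_tol, or None."""
    n = min(len(reference), len(candidate))
    ref_avg = moving_average(reference[:n], window)
    cand_avg = moving_average(candidate[:n], window)
    for step, (r, c) in enumerate(zip(ref_avg, cand_avg)):
        if abs(c - r) > rel_tol * abs(r):
            return step
    return None


if __name__ == "__main__":
    hopper = [3.0 - 0.01 * s for s in range(100)]                            # reference loss curve
    blackwell = [x * (1 + 0.001 * (-1) ** s) for s, x in enumerate(hopper)]  # small noise
    print(first_divergence(hopper, blackwell))              # None: within tolerance
    blackwell[60:] = [x + 0.2 for x in blackwell[60:]]      # simulated regression
    print(first_divergence(hopper, blackwell))
```

Smoothing before comparing keeps step-to-step noise from triggering false alarms while still flagging a sustained offset within a few steps of where it starts.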

Price, availability, and alternatives

In September 2025, a GB200 NVL72 rack costs around 3 million dollars for a direct buyer, with 9-month lead times except for preferred large customers. This means that during 2025 and much of 2026, Blackwell access for most organizations is essentially via cloud: CoreWeave, Lambda, Crusoe, Azure, AWS, and Google Cloud offer instances at 6 to 12 dollars per GPU-hour depending on region and commitment.
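The rack price and rental rates imply a rough rent-versus-buy break-even. A sketch using the quoted $3 million rack price and rates within the quoted $6 to $12 range; the $0.50 per GPU-hour operating cost and 70% utilization are hypothetical, and the model ignores facility build-out, networking, staff, financing, and resale value. The helper name is illustrative.

```python
import math

HOURS_PER_MONTH = 730  # 8760 hours / 12


def breakeven_hours(rack_price: float, gpus_per_rack: int, rent_per_gpu_hour: float,
                    opex_per_gpu_hour: float = 0.0, utilization: float = 1.0) -> float:
    """Illustrative helper: calendar hours of ownership after which buying beats renting.

    Renting costs rent_per_gpu_hour only for hours actually used; owning costs the
    purchase price up front plus opex_per_gpu_hour (power, cooling) when used.
    """
    capex_per_gpu = rack_price / gpus_per_rack
    saving_per_calendar_hour = (rent_per_gpu_hour - opex_per_gpu_hour) * utilization
    if saving_per_calendar_hour <= 0:
        return math.inf  # renting is never more expensive
    return capex_per_gpu / saving_per_calendar_hour


if __name__ == "__main__":
    for rent in (6.0, 9.0, 12.0):
        hours = breakeven_hours(3e6, 72, rent, opex_per_gpu_hour=0.5, utilization=0.7)
        print(f"${rent:.0f}/GPU-hour: break-even after about {hours / HOURS_PER_MONTH:.1f} months")
```

Because the lead time alone is nine months, a buyer who needs capacity now pays rental rates for most of the window in which ownership would otherwise start paying off.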

Real alternatives are few. AMD Instinct MI300X has gained share in 2025 with solid performance and better memory per dollar, but the ROCm ecosystem still trails CUDA, and serious teams treat it as a second choice. Google TPU v5p accelerators remain competitive but are only available inside Google Cloud. Intel Gaudi 3 has fallen to a distant third.

When it pays off

For most companies training their own models, Blackwell only makes sense if training time is the dominant bottleneck. If a training run takes a week on H100 and under three days on Blackwell, the extra cost may be justified. If it takes three days on H100 and just over one on Blackwell, the math is less clear, because the human time spent preparing data and analyzing results usually exceeds the training time itself.
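The argument is about the whole experiment cycle, not the training job alone. A sketch with hypothetical inputs: five days of human work per cycle, a 2.5x training speedup, 64 GPUs, a $9 Blackwell rate from the range quoted earlier, and an assumed $3 H100 rate (the text gives no H100 price). The helper name is illustrative.

```python
def compare_cycles(train_days_h100: float, speedup: float, human_days: float,
                   gpus: int, h100_rate: float, blackwell_rate: float) -> dict:
    """Illustrative helper: one experiment cycle (human work plus training) on H100 vs Blackwell.

    Rates are dollars per GPU-hour; the same GPU count is assumed on both platforms.
    """
    train_days_bw = train_days_h100 / speedup
    cycle_h100 = human_days + train_days_h100
    cycle_bw = human_days + train_days_bw
    cost_h100 = gpus * 24 * train_days_h100 * h100_rate
    cost_bw = gpus * 24 * train_days_bw * blackwell_rate
    return {
        "cycle_speedup": cycle_h100 / cycle_bw,
        "days_saved": cycle_h100 - cycle_bw,
        "extra_cost": cost_bw - cost_h100,
    }


if __name__ == "__main__":
    for train_days in (7.0, 3.0):
        r = compare_cycles(train_days, speedup=2.5, human_days=5.0,
                           gpus=64, h100_rate=3.0, blackwell_rate=9.0)
        print(f"{train_days:.0f}-day run: cycle {r['cycle_speedup']:.2f}x faster, "
              f"{r['days_saved']:.1f} days saved, extra cost ${r['extra_cost']:,.0f}")
```

In this toy setup the 7-day run saves 4.2 days per cycle and the 3-day run only 1.8, even though both get the same 2.5x training speedup.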

For inference, it depends on volume. At low volume, Hopper remains cheaper per query because Blackwell is overprovisioned. At high volume, especially with FP4 quantization, Blackwell can cut cost per query by a factor of 3 to 5. The crossover sits between 10% and 30% of sustained utilization depending on model type.
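One way to model why utilization decides the crossover is capacity granularity: you pay for whole units of capacity whether or not queries fill them. The sketch is a toy model with hypothetical numbers throughout: an 8-GPU Hopper node at $3 per GPU-hour serving 10,000 queries per hour, and a full NVL72 rack as the smallest Blackwell unit, at $9 per GPU-hour, sized to be 4x cheaper per query at full load. In this model the crossover utilization is roughly the inverse of the full-load cost advantage. The helper name is illustrative.

```python
import math


def cost_per_query(demand_qph: float, unit_capacity_qph: float, unit_rate_per_hour: float) -> float:
    """Illustrative helper: cost per query when capacity is rented in whole units."""
    units = math.ceil(demand_qph / unit_capacity_qph)
    return units * unit_rate_per_hour / demand_qph


# Hypothetical units (see lead-in): 8-GPU Hopper node vs a whole NVL72 rack.
HOPPER = {"capacity": 10_000, "rate": 8 * 3.0}
BLACKWELL = {"capacity": 1_080_000, "rate": 72 * 9.0}


if __name__ == "__main__":
    for utilization in (0.05, 0.10, 0.25, 0.50, 1.00):
        demand = utilization * BLACKWELL["capacity"]
        h = cost_per_query(demand, HOPPER["capacity"], HOPPER["rate"])
        b = cost_per_query(demand, BLACKWELL["capacity"], BLACKWELL["rate"])
        cheaper = "Blackwell" if b < h else "Hopper"
        print(f"rack utilization {utilization:4.0%}: Hopper ${h:.5f}, Blackwell ${b:.5f} -> {cheaper}")
```

Real deployments can rent Blackwell in smaller slices than a full rack, which moves the crossover; the sketch only shows why coarse, fixed capacity penalizes low volume.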

My take

Blackwell marks a phase change in AI infrastructure worth understanding even if you never touch one of these racks directly. When NVIDIA designs the product as a rack rather than a GPU, it is nudging the industry toward a model where the purchase and operations unit is the full cabinet, with liquid cooling and 120 kilowatts of density. That changes data center requirements, operations-team profiles, and hardware-software co-design decisions.

For application builders, the relevant part is that the largest models will be available faster and cheaper via API. Frontier training cost keeps growing, but unit query cost keeps falling. That favors strategies consuming third-party models through APIs over strategies training bespoke models. Only cases where the model itself is the competitive edge, or where data cannot leave the organization, justify training.

For operators of traditional infrastructure, Blackwell is an invitation to think about which parts of the data-center stack still belong to the general-purpose future and which belong to specialized AI workloads. Over the next five years data centers will likely split into two radically different profiles, and mixing them in the same building will stop making sense.